Last updated on 5.5.2024

Unnecessary pages - What causes the problem?

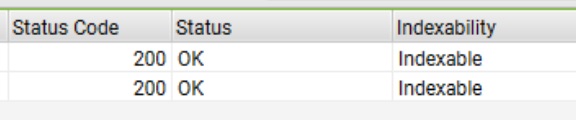

WordPress sites that use the Yoast SEO plugin have an SEO issue on archive pages.

On every archive page, the plugin automatically adds an internal link to the “next page” (/page/2/) as a result of its pagination tags (rel="next" and rel="prev").

How I solved it

Unfortunately, I didn’t find a way to remove that function from archive pages (without removing the plugin), but I found a workaround.

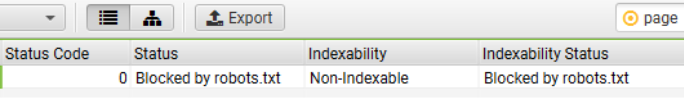

I blocked it in the robots.txt file, which makes those pages non-indexable. To do the same, add the following line to the file:

Disallow: /Archive-Name/page/

In my case: Disallow: /he/page/

This fixes the issue in Google Search Console.
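A rule like this can be checked before it is uploaded. The sketch below uses Python's standard `urllib.robotparser`, which handles plain prefix rules such as `/he/page/`. The `example.com` URLs and the `is_allowed` helper are made up for illustration, and the rules are written without blank lines inside the group because this parser treats a blank line as the end of a group.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; "/he/" is the archive slug from my case.
ROBOTS_TXT = """\
User-agent: *
Disallow: /he/page/
"""

def is_allowed(url, robots_txt=ROBOTS_TXT, agent="Googlebot"):
    """Return True if `agent` may crawl `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)

print(is_allowed("https://example.com/he/page/2/"))   # False: paginated archive is blocked
print(is_allowed("https://example.com/he/my-post/"))  # True: normal content stays crawlable
```

This only shows how a standard parser reads the rule. Google Search Console remains the place to confirm how Google itself treats the URL.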

Another way to block it (if there is more than one slug)

We can also block “feed” pages this way.

Remember: “*” matches everything, so be careful not to block pages or slugs that merely contain the word “page” (don’t use just “*page*”). Use a more specific pattern instead:

Disallow: */page/

In my case, a narrower rule is better:

Disallow: */he/page/
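To see why the exact pattern matters, here is a minimal sketch of wildcard matching, assuming the behaviour Google documents for robots.txt (`*` matches any run of characters, and a rule matches from the start of the path). The `rule_matches` helper and the sample paths are hypothetical. This is not how any crawler is actually implemented.

```python
import re

def rule_matches(pattern: str, path: str) -> bool:
    """Return True if a robots.txt path pattern matches the start of `path`.

    Only the '*' wildcard is handled here ('*' = any run of characters).
    """
    regex = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.match(regex, path) is not None

# Hypothetical paths: two paginated archives and one normal post slug.
paths = ["/he/page/2/", "/blog/page/3/", "/landing-page-tips/"]

for pattern in ["*page*", "*/page/", "*/he/page/"]:
    blocked = [p for p in paths if rule_matches(pattern, p)]
    print(pattern, "->", blocked)
# *page*     -> all three paths, including the normal post
# */page/    -> only the two paginated archives
# */he/page/ -> only /he/page/2/
```

The first pattern blocks the post "/landing-page-tips/" as well, which is the mistake the warning above is about.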

Example of a robots.txt file:

User-agent: *

Disallow: /wp-admin/

Disallow: /wp-includes/

Disallow: /wp-content/plugins/

Disallow: /?s=

Disallow: /page/*/?s=

Allow: /wp-content/uploads/

Allow: /wp-admin/admin-ajax.php

Allow: /*.js$

Allow: /*.css$

Robots.txt file for WooCommerce sites:

“Disallow: /” blocks the whole website for the named crawler (for example, crawlers of other search engines).

User-agent: *

Disallow: /wp-admin/

Disallow: /wp-includes/

Disallow: /*add-to-cart=*

Disallow: /cart

Disallow: /checkout/

Disallow: /my-account/

Disallow: /wp-content/plugins/

Disallow: /?s=

Disallow: /search/

Allow: /wp-admin/admin-ajax.php

Allow: /wp-content/uploads/

Allow: /*.js$

Allow: /*.css$

User-agent: BLEXBot

Disallow: /

User-agent: Cliqzbot

Disallow: /

Sitemap: https://domain.com/sitemap_index.xml
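The per-crawler groups can be sanity-checked the same way. The sketch below feeds a reduced version of the file above (plain prefix rules only, since `urllib.robotparser` does not interpret `*` wildcards as far as I know) to the standard-library parser. The `/shop/` path is an assumed example URL.

```python
from urllib.robotparser import RobotFileParser

# Reduced, hypothetical version of the WooCommerce file: prefix rules only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart
Disallow: /checkout/

User-agent: BLEXBot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def can_crawl(agent: str, path: str) -> bool:
    """Return True if `agent` may fetch `path` on the (assumed) domain.com site."""
    return parser.can_fetch(agent, "https://domain.com" + path)

print(can_crawl("BLEXBot", "/shop/"))    # False: "Disallow: /" blocks everything for BLEXBot
print(can_crawl("Googlebot", "/shop/"))  # True: falls back to the "*" group
print(can_crawl("Googlebot", "/cart"))   # False: blocked for every crawler
```

A crawler with its own group uses that group instead of the "*" group. That is why BLEXBot is blocked everywhere while other crawlers only lose the cart and checkout pages.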

We can also block a specific file type:

Disallow: /*.html$
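The `$` at the end anchors the rule to the end of the URL. Here is a small sketch of that behaviour, again assuming Google-style matching. The `to_regex` helper and the sample URLs are hypothetical.

```python
import re

def to_regex(rule_path: str) -> re.Pattern:
    """Translate a robots.txt path with '*' and a trailing '$' into a regex."""
    escaped = re.escape(rule_path)
    escaped = escaped.replace(r"\*", ".*")   # '*' = any run of characters
    if escaped.endswith(r"\$"):
        escaped = escaped[:-2] + "$"         # trailing '$' = end of URL
    return re.compile(escaped)

rule = to_regex("/*.html$")

for url_path in ["/about.html", "/docs/index.html", "/about.html?ref=1", "/about.htm"]:
    print(url_path, "->", "blocked" if rule.match(url_path) else "allowed")
# /about.html        -> blocked
# /docs/index.html   -> blocked
# /about.html?ref=1  -> allowed (the URL does not end in .html)
# /about.htm         -> allowed
```

Without the `$`, the same rule would also match URLs that continue after ".html", such as the query-string example.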